Introducing EchoSelf: AI-Powered Voice Notes for iPhone

Naveed Ahmad

Founder & CEO

A New Era in Idea Capture

Today I'm excited to introduce EchoSelf, a fundamentally new approach to capturing and organizing your thoughts on iPhone.

Built around a privacy-first architecture, EchoSelf performs speech recognition directly on your device, so your most valuable asset, your ideas, remains under your control. This local-first approach also means you can capture thoughts anytime, anywhere, regardless of connectivity.

Beyond Simple Transcription

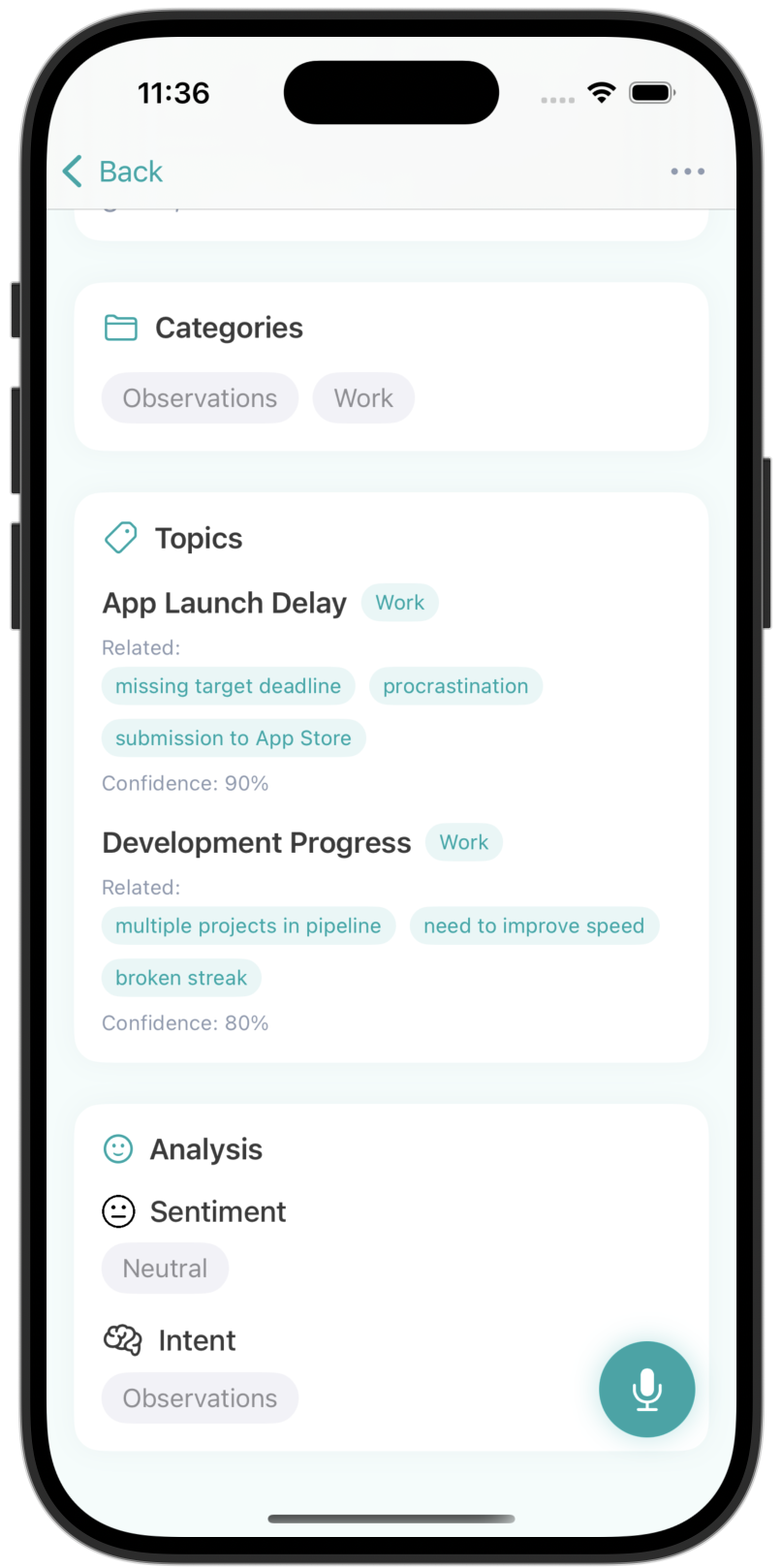

What sets EchoSelf apart is its cognitive understanding of your voice notes. Beyond mere transcription, the app analyzes content to automatically identify key topics, detect sentiment, and even recognize different types of notes—from task lists to creative brainstorms to meeting summaries.

The intelligent organization system eliminates the need for manual filing. Your notes are automatically categorized and made searchable not just by keywords, but by concepts and context. Ask for "ideas about the marketing project from last week," and EchoSelf understands exactly what you're looking for.

Designed for Speed and Focus

I've also reimagined the capture experience. A streamlined interface lets you start recording within a second of opening the app, and an on-screen visualization gives immediate feedback on audio quality and processing status.

For teams, EchoSelf offers selective sharing, so you can brainstorm with collaborators while keeping granular control over your personal thought library.

The Personal Journey

As an indie developer, I created EchoSelf to solve my own challenges with thought capture. After years of struggling with fragmented notes across different apps and services, I wanted something that would work the way our minds do—fluid, contextual, and always ready when inspiration strikes.

EchoSelf represents my vision for frictionless thought capture—where the technology disappears, leaving only your ideas, perfectly preserved and organized, ready when you need them.
